What Is a Broken Link on a Website?

Many factors influence a site's position in search results, and one of them is its internal and external link profile. Broken links on a site can hurt both its ranking and the impression it makes on users.

How Do You Recognize a Broken Link?

A broken link (also called a "dead link", "defective link" or "missing link") is a hyperlink that no longer works correctly, either because there is an error in the source code or because the linked page no longer exists. The link target can be either a web page or a file. Users who click on a broken link usually receive an error message with the status code "404 Not Found" or "410 Gone". If a website has too many broken links, its ranking can drop.

A link on a website is typically broken for one of the following reasons:

- A hyperlink leads to a file or a page that can no longer be reached through the server because it has either been renamed, moved, or deleted altogether.

- The referring domain has been newly registered and has no content yet. Links to old URLs have consequently disappeared.

- The target page is not accessible because the server on which it is located has network problems or has been rebuilt.

- The domain of the target page has been dissolved or deregistered, for example because the operator abandoned it or failed to pay the hosting fees.

- The source code of the link is incorrect. The usual reasons for this are:

  - Typos in the domain name or source code.

  - An incorrect file extension or top-level domain was used, for example .html instead of .php, or .eu instead of .com.

  - The quotation marks around the URL are missing in the source code.

To prevent 404 errors, check the site for broken links regularly.
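A regular check of this kind can be scripted. The following is only a minimal sketch using Python's standard library. The function names (`is_broken`, `fetch_status`, `find_broken`) are illustrative assumptions, not part of any tool mentioned in this article. It treats the status codes 404 and 410 as broken, and it also reports URLs whose server cannot be reached at all.

```python
import urllib.error
import urllib.request

# Status codes this article names as typical for broken links.
BROKEN_STATUS_CODES = {404, 410}


def is_broken(status_code):
    """Return True if the status code indicates a dead link (404 or 410)."""
    return status_code in BROKEN_STATUS_CODES


def fetch_status(url, timeout=10.0):
    """Return the HTTP status code for url, or None if it cannot be reached.

    Assumption: a HEAD request is enough. Some servers reject HEAD,
    so a real checker may need to fall back to GET.
    """
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx and 5xx responses are raised as HTTPError; keep the code.
        return error.code
    except (OSError, ValueError):
        # Network failure, timeout, or a malformed URL.
        return None


def find_broken(urls, status_of=fetch_status):
    """Return the URLs that answer with 404/410 or cannot be reached."""
    broken = []
    for url in urls:
        status = status_of(url)
        if status is None or is_broken(status):
            broken.append(url)
    return broken
```

Calling `find_broken` with a list of URLs returns the ones that need attention. The `status_of` parameter exists so the lookup can be swapped out, for example for a cached result or a test double.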

Why Are Broken Links Important in SEO?

Broken links are a disadvantage for search engine ranking and lead to devaluations if they occur frequently. A high number of broken links signals to search engines that the website to which these links lead is no longer properly maintained. Because a search engine always wants to present the most relevant and accurate content in response to search queries, it becomes more likely to classify such a website as outdated and therefore less relevant. The linked website also loses valuable link juice because of broken backlinks.

Therefore, it is a sign of quality when webmasters fix or replace broken links. The crawler will most likely register the positive change during its next visit to the website and include it in the overall ranking. The webmaster can support the process with an XML sitemap that lists the subpages of the website and is kept up to date automatically. Crawlers such as Googlebot evaluate XML sitemaps in order to search the web pages of a domain more quickly.
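To illustrate what such a sitemap contains, here is a minimal sketch that builds one with Python's standard library. The function name `build_sitemap` and the example URLs are assumptions for illustration. The namespace is the one defined by the sitemaps.org protocol, and only the required `loc` element is written for each page.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML document (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" when serializing.
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages; in practice the list would come from the CMS.
    print(build_sitemap(["https://example.com/", "https://example.com/blog/"]))
```

Regenerating the file from the list of pages that currently exist is what keeps the sitemap free of dead URLs.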

At the same time, broken links can play a role in link building and become part of an SEO strategy. The principle is simple: the dead link is replaced by a new one. First, you identify pages that contain broken links to external sources. This is done with a keyword search followed by a search for 404 pages, and a link checker such as Ahrefs can help. Then you write to the webmaster of the page, point out the problem, and offer your own content as a replacement target for the link. Successful broken link building depends on high-quality content and on the webmaster's willingness to fix the broken link.

How To Find Broken Links?

Dead links can be found manually, through user complaints, or with dedicated analysis tools. Let's take a look at the best methods for finding dead links on a site.

Some content management systems, such as WordPress, can be equipped with plugins that reliably search for broken links. If you discover a broken link while browsing or doing keyword research, you can often find the original target page via the Wayback Machine, or in the Google Cache if the link went dead only recently.

To find dead links, we suggest combining several methods and tools.

Manual Moderation

Manual search is a lengthy and inefficient process. It is suitable only for sites with no more than 25-35 web pages. Beyond that, you will spend a huge amount of time searching for links and can still miss a large number of problems.
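Even on a small site, the step of collecting the links from each page can be scripted rather than done by eye. The sketch below is one possible approach using Python's built-in HTML parser. The names `LinkCollector` and `extract_links` are illustrative assumptions. It gathers the `href` of every `<a>` tag and resolves relative links against the page's own address.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect the targets of all <a href="..."> tags in a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the page URL.
                # Note: mailto: and fragment-only links are kept as well.
                self.links.append(urljoin(self.base_url, value))


def extract_links(html, base_url):
    """Return all link targets found in html, as absolute URLs."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links
```

The resulting list can then be opened one by one or passed to a status-code checker.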

Google Search Console

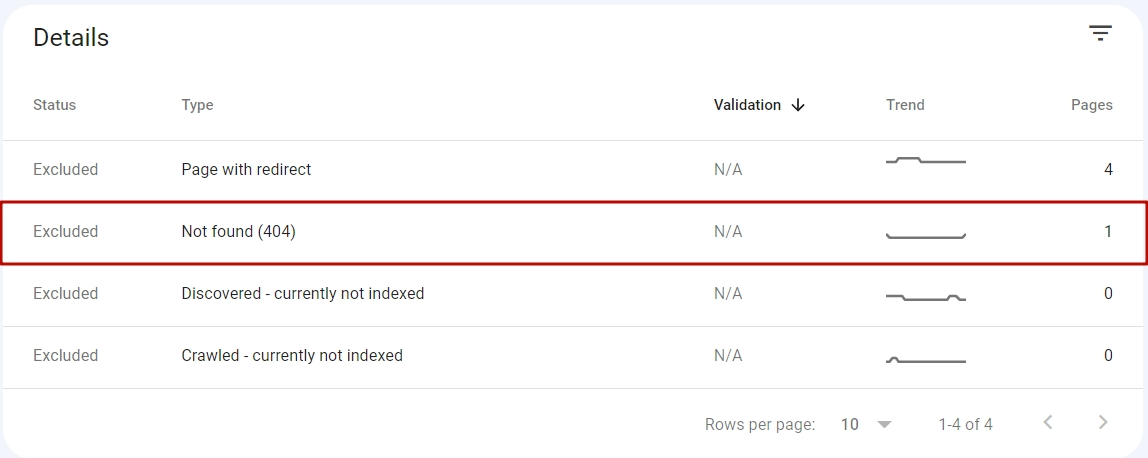

Google Search Console can be used to identify 404 pages.

Do the following:

- Open the Google Search Console service, select the "Pages" section;

- Click on "Excluded" and then on "Not found (404)".

After these steps, you will see a list of dead links along with statistics on them in the form of charts. Google Search Console automates and simplifies the search and helps you avoid overlooking important problems.

Screaming Frog

This tool lets you crawl a site and get detailed information about its pages. It comes in a paid version and a free version. The free version lets you crawl up to 500 URLs and is suitable for small sites.

To find broken links, enter the domain of the site and start the crawl. Then go to the "Response Codes" tab and select the response code 404 or 410. Next, select the URL you are interested in and open the "Inlinks" tab (incoming links). You will see a list of pages that link to the non-existent page.

Ahrefs

Ahrefs is often called the best service for SEO promotion. Full functionality requires a paid plan here as well, but there is a free version that lets you test the tools.

To find dead links using Ahrefs, go to the Site Explorer tab and enter a domain name. Then go to the Outbound Links tab and click on Broken Links. Alternatively, you can use the Site Audit tool. Once it is open, click on Project, then External Pages, and then HTTP Status Codes. In the list that appears, click on the 4XX issue to see all external dead links.

Both Screaming Frog and Ahrefs can also be used for the broken link building described above.

How To Fix Broken Links?

Broken links are unavoidable, so rather than fearing them, monitor your website regularly to find and eliminate them. If the 404 page is the result of an incorrectly entered URL, the problem can be fixed manually. Errors caused by a change in the URL structure are handled by setting up a 301 redirect, which on Apache servers is typically done through an .htaccess file. This allows the ranking of the subpage to be maintained in the search engine. A user who requests the old subpage is redirected almost instantly to the new one and finds the desired content instead of ending up at a dead end. Identifying the cause and fixing the problem usually takes no more than 5-10 minutes; finding the dead links is the more time-consuming part.
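When many URLs change at once, for example after a restructuring, the redirect rules can be generated instead of typed by hand. The following sketch assumes an Apache server with `mod_alias` enabled, whose `Redirect` directive takes a status, the old URL path, and the new target. The function name `redirect_rules` and the example paths are hypothetical.

```python
def redirect_rules(moved_pages):
    """Return .htaccess lines that 301-redirect each old path to its new URL.

    moved_pages maps an old URL path (starting with "/") to the new target.
    Assumption: paths contain no spaces; such paths would need quoting.
    """
    lines = []
    for old_path, new_url in moved_pages.items():
        if not old_path.startswith("/"):
            raise ValueError(f"old path must start with '/': {old_path!r}")
        lines.append(f"Redirect 301 {old_path} {new_url}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical example of two renamed subpages.
    print(redirect_rules({
        "/old-page.html": "/new-page.html",
        "/shop/item.php": "https://example.com/products/item",
    }))
```

The printed lines can be pasted into the site's .htaccess file. It is worth requesting one of the old URLs afterwards to confirm that the redirect works.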

One method to avoid broken links is to submit a sitemap in XML format with all available subpages of a domain to search engines. Optionally, a separate sitemap can also be submitted for images only.

Those who engage in link building should also make sure that all subpages of their project are accessible, especially after a relaunch or redesign of the website. Outgoing links should also be checked for broken links at regular intervals.

Why fix dead links?

To keep users and search engines satisfied, you have to fix dead links. Search engines tend to list web pages with broken links in lower positions on the results pages. A frequent occurrence of broken links can therefore lead to a devaluation, although its extent is not predictable because many other factors also affect ranking.

Users, just like search engines, appreciate it when the content of a website is up to date and they find the information they were looking for after clicking on a link. Basically, what is good or bad for users is also good or bad for bots.

So if dead links pile up on or to a website, both user experience and usability suffer. For search engines such as Google or Bing, dead links impair the user experience, and consequently the target page is rated negatively.

If dead links on a website are not removed, crawlers will check them again each time the website is visited. For this reason, it can happen that even target pages that no longer exist remain in the index of a search engine. With the help of an XML sitemap, search engines can always be provided with an up-to-date overview of all working links on a domain.

Conclusion

The frequency of link profile checking depends on the type of resource (commercial, informational, blog), the number of pages and backlinks, the frequency of content updates, and other factors. The optimal frequency for small resources is 3-4 times a year; for multi-page and frequently updated sites, the check should be carried out at least 5-6 times a year. It is recommended to use webmaster services as well as third-party programs, since a comprehensive approach helps identify the maximum number of problems.